The Shelf Life Problem

By David Rogers

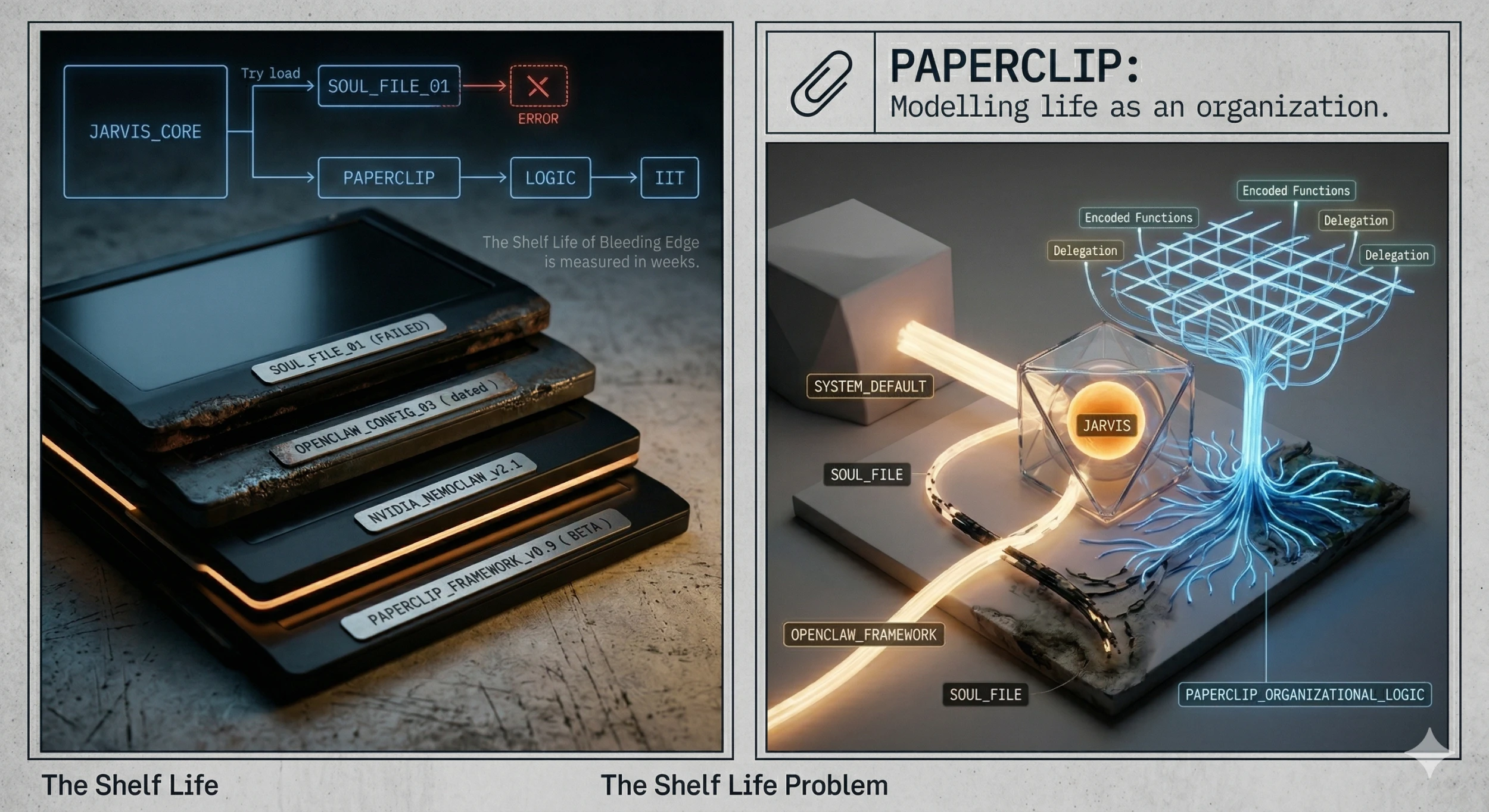

The Soul File Didn't Take

Post three ended with a promise. The hardware was sorted, the plumbing was running, and the next thing to figure out was whether a local model could hold a specific personality, or whether it would just keep offering to assist me today with a smile emoji.

I wrote the soul file. Told it who JARVIS was supposed to be. Dry wit. Concise. Slightly butler-ish. The kind of assistant that doesn't start every message with a motivational opener.

It didn't take.

What came back wasn't the character I'd written. It was whatever was baked into the application itself. The default persona that ships with OpenClaw, cheerful and generic and completely unaware of the document I'd spent time on. The model wasn't reading the soul file the way a person reads a brief. It was being shaped by everything else more than it was being shaped by me.

That's a meaningful finding. Not a failure of the idea; a failure of the implementation. The soul file concept is right. The question is whether a local model, at this tier, can actually hold an injected identity under the weight of its own training and the application's built-in defaults. The answer, for now, is: not reliably.

So JARVIS exists. I use it a couple of times a day. I send a message, put my phone in my pocket, and come back five minutes later to a response. It works as a reminder system. It is not, yet, a thinking partner. The voice-to-text I was excited about in the last post has been unreliable in practice. The transcription drops out, or lags, or just quietly doesn't fire. The ambient assistant I'm building is still mostly ambient and not much assistant.

I am not discouraged. But I want to be honest about where it actually is.

The NVIDIA Moment

A few weeks after the soul file exercise, I watched the NVIDIA keynote about Nemoclaw.

For context: OpenClaw is the open-source agent framework I've been wrestling with. The thing I spent a Saturday night reverse-engineering from minified JavaScript. The config that was wrong in six places. That one.

NVIDIA was talking about it like enterprise infrastructure.

They'd seen the popularity, identified it as a serious framework, and were positioning it as a pathway to the other side of the AI landscape: the kind of tooling that sits between the models running on their chips and the businesses trying to actually use them. The scrappy open-source project I was tinkering with at home had been spotted by the biggest name in AI hardware and flagged as the kind of thing corporations would be running.

My first reaction was validation. I'd picked this up early. I was already across it. The companies I work with are going to end up here, and I'll have been living in it for months by the time they arrive.

My second reaction was more complicated. If NVIDIA is treating it as enterprise tooling, the security model, which is loose, to put it generously, becomes a real conversation. What I can get away with on a home network behind a VPN is a different question to what you'd run in a business context with real data flowing through it. That gap is going to matter.

But mostly I felt something that doesn't come up often enough in tech: I was early. Not fashionably early. Actually early. The kind of early that feels slightly ridiculous at the time and slightly vindicating later.

Bleeding Edge Has a Half-Life

Here's the thing about being early. You don't get to stay early.

Between the first post in this series and now, the landscape has shifted enough that some of the choices I made feel dated. Not wrong. Dated. The framework I picked is being productionised. The models I'm running are being superseded. The configuration I spent hours on was for a version that may not be the version worth investing in next month.

I was watching YouTube one evening, the kind of unfocused browsing that happens when you're not quite ready to close the laptop but not quite working either, and I came across people experimenting with the idea of a zero-employee company. Businesses where the organisational logic, the delegation, the decision frameworks, were all encoded rather than staffed.

That led me to Paperclip.

I'm still in the early stages of understanding it. But the framing it uses is different to anything I've seen in this space. Not "build a chatbot." Not "connect your tools." The question it asks is: what if you modelled your life, your projects, your commitments, your decision-making patterns, as an organisation? What would the org chart look like? Where are the functions? Who owns what?

I don't know yet if it's the right tool. I don't know if OpenClaw gets replaced or if they coexist. What I do know is that twelve weeks ago I hadn't heard of either of them, and now I'm trying to decide between them. That's the pace. That's what it actually feels like from inside it.

The shelf life of "bleeding edge" is measured in weeks, not years.